When I wrote Popeye’s Spinach, it all came from within. There was no editorial plan, no pre-designed structure - there was a real experience that needed to come out. I talked about how AI hadn’t given me new capabilities, but had unlocked the ones that were always there: the expressive muscle that had been underused for decades because I was thinking faster than I could write.

Today I’m back with the second part, after completing the AI Fluency course published by Anthropic Academy. That’s where I found the scientific framework that gave a name to what I was already doing.

The discovery

When I mention “extremely curious” in my bio, I mean it literally. And in that same spirit of curiosity - browsing the course catalog at Anthropic Academy - I found answers to questions I hadn’t even consciously formulated.

I found an academic framework that describes exactly what I was doing. Not because I read it first and applied it afterwards. The other way around: I was already living it, and they gave it a name.

The AI Fluency Framework

The AI Fluency Framework was developed by two academics:

- Rick Dakan - Professor of Creative Writing and AI Coordinator at Ringling College of Art and Design, Florida.

- Joseph Feller - Professor of Information Systems and Digital Transformation at Cork University Business School, University College Cork, Ireland.

The framework emerged from a research collaboration exploring the intersections between AI, creativity, innovation, and learning. It’s not purely theoretical - it has been applied in university courses in both Florida and Ireland since the 2023/2024 academic year.

The central definition is powerful in its simplicity:

AI Fluency is the ability to work effectively, efficiently, ethically, and safely within emerging modalities of Human-AI interaction.

Pay attention to those four words: effectively, efficiently, ethically, and safely. It’s not just about “knowing how to use Claude/ChatGPT/Gemini.” It’s about knowing when to use it, how to use it, and taking responsibility for what we do with the results.

The three modalities

The framework identifies three ways humans interact with AI:

Automation - AI performs tasks that you define. You give the instruction, AI executes it. Think about drafting an email, summarizing a document, converting a file format. It’s useful, it’s efficient, but the human only participates at the beginning and at the end.

Augmentation - AI and the human work together iteratively. It’s not “do this for me” and done. It’s a back-and-forth: you bring the vision, AI helps you organize it, you correct, AI adjusts, and so on until you reach the result. This is the mode I predominantly use. When I write an article for this blog, the thesis is mine, the arguments are mine, the examples are mine - but AI helps me externalize them, give them structure, take them from thought to paper.

Agency - The human configures AI to act independently in the future. You don’t tell it what to do now, but how to behave later. Think of a chatbot you design to serve customers, or an agent that automatically generates reports. This modality requires a deeper understanding of AI’s capabilities and limitations.

What’s interesting is that these modalities aren’t separate boxes. In practice, we move between them even within a single project.

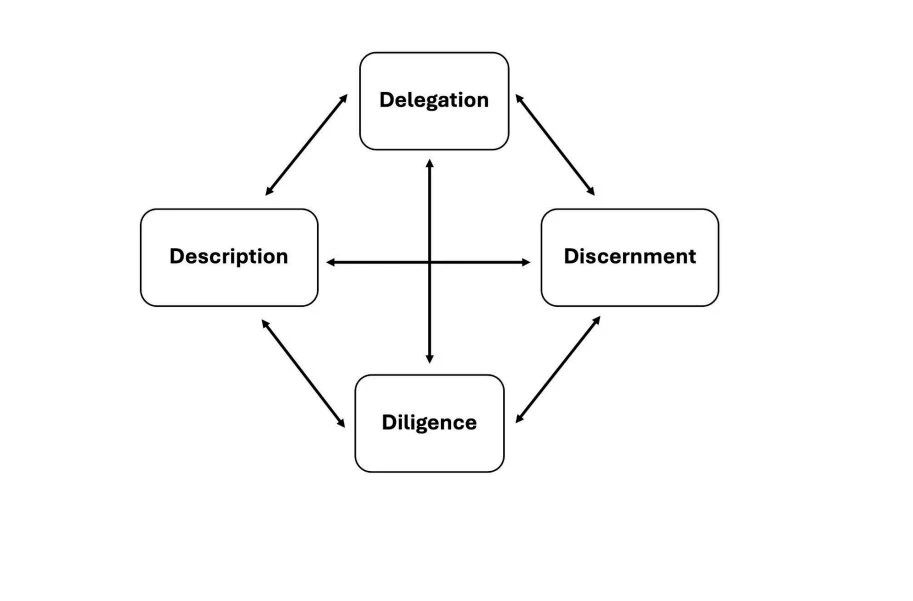

The 4 D’s: The heart of the Framework

This is where the framework comes alive. Four human competencies - not AI competencies, human ones - that determine whether your interaction with AI will be productive or a waste of time.

The framework presents these competencies as four interconnected nodes: Delegation at the top, Description on the left, Discernment on the right, and Diligence at the bottom - all connected to each other with bidirectional arrows. It’s not a linear process; it’s a dynamic cycle where each competency feeds the others.

Fig. 1 - Core AI Competencies (Dakan & Feller, 2025)

Delegation - Knowing what to delegate to AI and what not to

Not everything gets delegated to AI. Delegating well means understanding what AI can do better than you, what you can do better than AI, and where working together produces the best result. It means knowing your tools - using an LLM to analyze a contract is not the same as using it to generate an image. Each tool has strengths and limitations.

Practical example: If I need to analyze a 50-page technical document, I delegate the extraction and structuring of information to AI. But the interpretation, the judgment about what matters and what doesn’t, the strategic decisions - I retain that. AI organizes; I decide.

Description - Knowing how to communicate what you want

AI doesn’t read minds. If you can’t describe what you need - the expected result, the format, the tone, the constraints - you’ll get something generic. Description isn’t just about “prompting well.” It’s about articulating your vision clearly enough for AI to execute it, and knowing how to guide the process iteratively when the first result isn’t quite what you were looking for.

Practical example: When I work on an article for this blog, I don’t tell AI “write me an article about X.” I provide the thesis, the central argument, the examples I want to include, the philosophical framework, the tone. And then I correct each iteration: this section sounds too generic, this one doesn’t reflect my voice, this one is missing the source link. The final article goes through four or five rounds before I say “this is what I wanted to say.”

Discernment - Evaluating what AI gives you back

This is perhaps the most critical competency. Language models are trained to produce responses that sound good, and they tend to tell the user what they want to hear. This is no secret. AI will always give you something that sounds coherent, articulate, and convincing. That’s why discernment has to be sharp: it’s not enough to read the output and say “sounds good.” You have to ask yourself whether it’s correct, whether it’s authentic, and whether it reflects what you actually wanted to say.

Practical example: If AI generates a paragraph that sounds impeccable but uses a clinical tone that isn’t my voice, I delete it. I don’t edit it - I delete it. If it introduces a section with terminology I wouldn’t use, out it goes. Discernment isn’t just about detecting factual errors; it’s about detecting when the output sounds polished but isn’t authentically yours. AI will always try to please you. Your job is to not settle for the flattery.

Diligence - Taking responsibility for what you produce with AI

If you publish something created with AI assistance, it’s yours. The responsibility doesn’t belong to the model - it belongs to you. Diligence covers three areas: using AI ethically (respecting copyright, detecting biases), being transparent about how you used it, and verifying everything before publishing.

Practical example: Every article I publish on this blog carries an ethics and authorship note. Not because it’s required - because I believe in transparency. If I used AI to shape the article, I say so. If the thesis, arguments, and experience are mine, I say that too. The reader deserves to know what they’re reading.

What makes this Framework special

This framework has three characteristics that make it particularly valuable:

- Platform agnostic - It doesn’t matter if you use Claude, ChatGPT, Copilot, Gemini, or any other LLM. The 4 D’s apply equally. The framework doesn’t tie you to a tool; it teaches you to think about how you use any of them.

- Ethics-centered - Ethics isn’t an appendix at the end. It’s a fundamental part of every competency, especially Diligence. Using AI responsibly is as important as using it efficiently.

- Flexible - It doesn’t prescribe rigid processes. It describes effective action and adapts to any professional context, whether you’re a designer, an engineer, a writer, or an IT consultant with 33 years of experience.

Your turn: The exercise and the course

I did this exercise. I asked my trusted LLM to evaluate me according to the 4D Framework, sharing the Framework PDF and my interaction patterns. The result was revealing - not because of the praise, but because of the clarity of seeing which dimensions I’m strong in and where my natural growth frontiers lie.

I want to invite you to do the same. Open your trusted LLM - whichever one you use regularly - and ask it to evaluate you according to the 4D AI Fluency Framework. Share the Framework PDF with it, explain how you use AI in your work, and ask for an honest evaluation.

Don’t look for praise. Look for clarity. Which D are you strong in? Which one do you need to work on? Are you using AI mostly in Automation mode when you could be in Augmentation?

The goal isn’t to get a grade. It’s to understand where you are and where you can grow.

And if you want to go beyond the exercise, I invite you to take the full AI Fluency: Framework & Foundations course at Anthropic Academy. It’s free, well-structured, and gives you the complete context of the framework I’ve summarized here.

Conclusion

When I wrote Popeye’s Spinach, I didn’t know this framework. Today I do, and looking at it I feel it validates something I knew instinctively: that AI is a powerful tool, but only if the human using it has the vision, the judgment, the descriptive capacity, and the responsibility to use it well.

Spinach didn’t give Popeye his muscles. The muscles were already there. Spinach activated them.

The same goes for AI. It won’t make you smarter than you are. But if you already have the knowledge, the experience, and the curiosity - AI helps you amplify it.

The 4D Framework is the map. But you walk the path.

Sources:

- AI Fluency Framework (v1.1, 2025), PDF

- Anthropic Academy Course: AI Fluency: Framework & Foundations

- Framework Page: Ringling LibGuides

- License: CC BY-NC-ND 4.0

Cesar Rosa Polanco - Written from real experience, with AI assistance as a cognitive amplification tool.